Hey there! My name is Robin and I joined Whales and Games during Ludum Dare 40 to handle the music composition and SFX design for our game, Jazzy Beats.

I mainly do music composition as a hobby on top of being a software engineering university student. My passion is games and their creation.

In this post I’ll be going through the creation process of the music for Jazzy Beats, and in a future post I’ll cover the SFX process in more detail.

Materials

The list of materials I used for the music composition this time around was as follows:

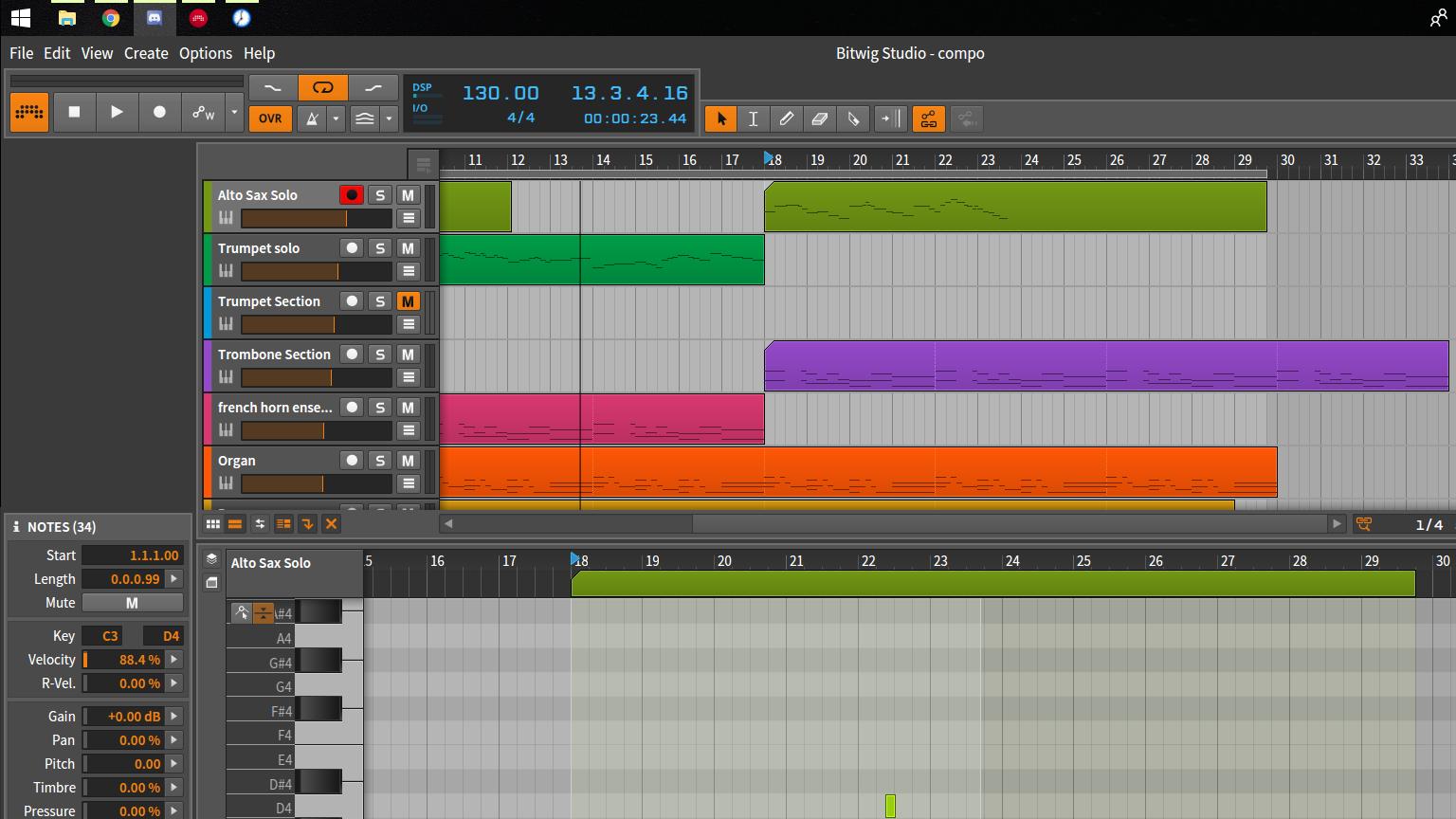

- Laptop with a DAW (Digital Audio Workstation) installed (Bitwig Studio)

- MIDI Controller: M-Audio Keystation Mini 32

- A Pair of Standard Headsets

While preparing for the jam, I ran into problems with Cubase, the DAW I had wanted to use for the music composition. Instead, I used my backup DAW, Bitwig Studio.

Bitwig Studio is a rather new DAW in the audio scene, but it has some interesting features. One example is being able to open multiple projects at once and switch between them easily (almost like Chrome tabs). Each plugin (or VST) also runs in its own sandbox, meaning that even if a plugin crashes, it doesn’t take the whole program down with it. Plus, it’s rather intuitive for beginners like me to use.

This was also my first time participating in a game jam with a MIDI controller, and I have no freaking idea why I hadn’t bought one of these before. The MIDI controller I use is a simple one, with only 32 keys, a volume knob and octave buttons. It’s light and quite small, and, as such, it’s perfect to take with me to local game jams, especially when they involve traveling to other places (picture above).

It’s also quite cheap, meaning it’s a perfect choice to get into music composition even if it’s just for something like game jams!

Workflow

Music is not something easy to create, especially during a game jam. The reason behind this is that most music is built around the visuals of something. In most games’ development cycles, the music is primarily created once the art and the base game mechanics are in a state where they won’t change much. In game jams, by contrast, you usually don’t have the time to wait for the visuals to be developed.

What did I do to resolve this issue? After the game idea was settled, I waited for the first sketches of the game, whilst also searching for references. I also asked the game’s programmer and artist for music ideas based on what they’d like their characters to sound like, and about the games that inspired its visual style. In this case the visuals were highly inspired by two games, Skullgirls and The World Ends With You, so I started listening to some of the music in those games. This was the music track I used the most as a reference:

After listening to some other tracks, I started setting up the instruments I wanted to use and putting some chord progressions together alongside them. With a few progressions in place, I started searching through some non-game soundtracks to see if I could find any interesting ideas in BPMs, beats, and even in the combination of instruments.

After I got some ideas, I started changing the chord progressions to have more rhythm together with the base/background instruments. The music composition kept changing as new art and gameplay emerged.

At the end of the first day I had the game’s music created. Even though it was done, over the following days I needed to keep adapting the music to fit the visuals and the sound effects.

To keep making those changes, you really need a good workflow that lets you easily edit, mix and master the tracks. Always be ready for more changes. Unfortunately, the perfect workflow is something I haven’t managed to achieve yet.

You can listen to the game’s soundtrack here on my personal SoundCloud account. If you’d like to give the game a try and hear the music in the game itself, take a look at our game page! Thank you!

You can find the original blog post on the Ludum Dare site here.